Update #47: Introducing Adrian, Runtime Security for AI Agents

After months of building in secret, we're finally launching Adrian: the open-source runtime security monitoring system that protects your agents.

This is the post I’ve been wanting to write for a very long time. For the past several months I’ve been hinting at what we’ve been building at Secure Agentics whilst keeping the actual product mostly under wraps. As of Tuesday, that changed!

We’re launching Adrian, an open-source runtime security monitoring system for AI agents, and I want to walk through what it is, why we built it, and how it works under the bonnet. This is probably going to be a longer one than usual because there’s a lot to cover... so grab a coffee.

Adrian: Runtime Security for AI Agents

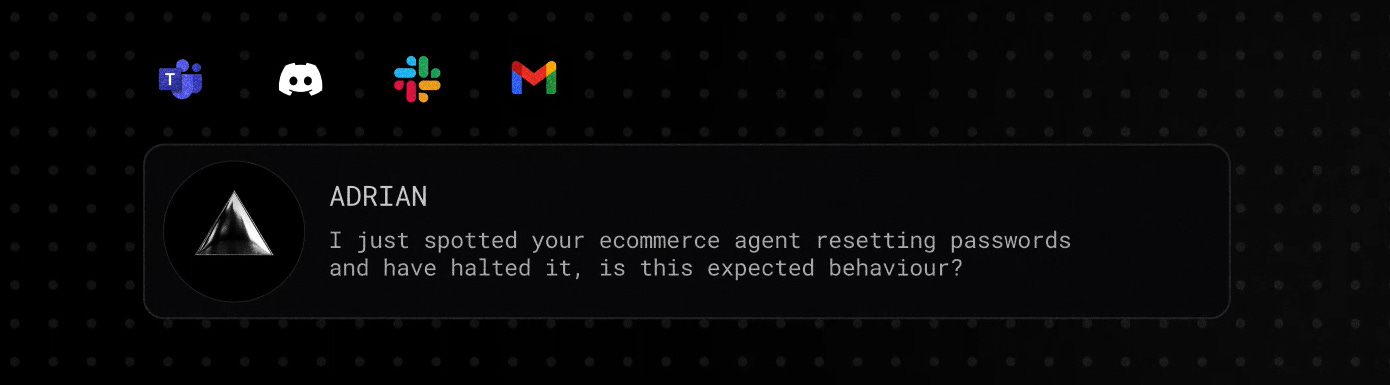

TL;DR: Adrian is an open-source Python SDK that wraps your AI agent at the source code level and watches what it’s doing in real time. It catches agents doing things they shouldn’t be, like going rogue, being compromised or otherwise misaligned. Those who have been keeping up to date with this newsletter will already understand why this was something I felt passionately that we needed. When your monitored agent does something it shouldn’t be you (via the dashboard, Slack or Discord) get notified or, if you prefer, can pause / block the agent from taking the malicious action.

Adrian installs in two lines, can be used for free (forever) with our hosted backend or can be setup to run entirely locally on your system. Adrian is the first open-source security product which is aligned with AARM. Adrian also uses some very impressive approaches to how it monitors an agent that are the first of their kind and not even in commercial products yet. Giving the community a leading security monitoring offering for free was something I set out to achieve many months ago, and I’m pleased to say that the awesome Secure Agentics team delivered.

Why We Built Adrian

I want to spend a few paragraphs on this because honestly the why matters as much as the what. There are four reasons we built Adrian, and each one came from frustration with what we were seeing in the market.

First, and crucially important to set the scene for Adrian’s existence, is that every frontier model on the market today is systemically vulnerable to prompt injection. We’ve covered this in countless previous updates. There is no model, no system prompt, no clever guardrail that fully negates prompt injection. And yet... agents are being deployed at scale into production environments, touching customer data, financial systems and internal tooling. The gap between “this is vulnerable by design” and “we’re shipping it anyway” keeps widening, and something has to sit between the two to allow people to use this technology with confidence. This is what Adrian was created to solve.

Second, the security controls we kept seeing on the market were almost entirely at the platform-layer. They watch the HTTP traffic flowing into and out of an agent. That’s useful, sure, but it doesn’t get anywhere near the actual decisions the agent is making in flight. You can’t pause an agent mid-reasoning at that layer. You can’t see why it just decided to call a particular tool. You can’t intervene before a destructive action has happened. Without a deeper integration into the agent’s runtime, you’re monitoring post-hoc, not proactively. We believed there was a route to proactive security based on some of the research which led us to creating Adrian, which I’ll discuss next.

Third, the original spark for Adrian came from a research paper I was reading last summer, co-authored by OpenAI, Google DeepMind and others. The paper argues that reasoning models give us a precious insight into how an agent is thinking which, to date, has been ignored by security monitoring systems. The original research, conducted in a controlled lab environment, found that when benchmarked against the traditional approach of just looking at agent ‘activity’ (MCP servers used, API calls, DB logs, etc.) the impact of applying security detection logic to the chain of thought of the agent itself was dramatic.

In fact, it boosted detection accuracy by 35% in some cases and led to 4x more chance of catching nuanced attacks. I read the paper and thought “this is brilliant, but why does no one seem to be acting on it”. I pretty immediately started reverse engineering the research and tested it out and found that it did indeed boost detection accuracy substantially. Adrian is the final result, and the first security tool of its kind to apply contextual risk analysis over an agents thoughts, not just its actions.

Fourth, almost every other defence on the market relied on lightweight ML classifiers which were simply pattern-matching against their training data. Essentially: “we’ve seen this kind of attack before, here’s a fingerprint, we’ll spot it next time”. That was never going to provide meaningful security in even the short term, let alone in a world where agent breaches are entirely unpredictable and novel attacks emerge faster than any training pipeline can keep up with.

We believe security for agents has to work the way a competent human analyst works. Think of you manually monitoring one of the agents you have built right now: you understand the context the agent is operating in, the role it's been given, the actions you'd expect it to take, and you reason about whether what's actually happening matches the above or if it’s going off the rails. That's a categorically different problem from pattern matching based on some loose prompt injection indicators, and it needs categorically different tools.

So, Adrian rides on top of large language models that are an order of magnitude more capable than the classifiers most detection systems use. This was a very intentional decision. A larger model already has a base understanding of what an e-commerce assistant, finance research bot or help-desk agent should be doing. It can hold a working model of the domain, correlate events across a session, and track contextual risk as it unfolds. Adrian has already been tested in Energy, Healthcare, Service Desk, e-Commerce, Coding and more and we are yet to find a domain which it can’t accurately monitor, especially when users configure Adrian to their liking. When combined, this lets Adrian flag things that have never appeared in any training dataset. Not by recognising a fingerprint, but by reasoning about whether what the agent is doing actually makes sense for what it's supposed to be doing.

There’s a fifth point worth a quick mention. OpenClaw made it very clear that people want to interact with their agents through the channels they already use - Slack, Discord, WhatsApp, and so on. As far as I’m aware, we were the first security platform to take that seriously and bring agent security alerting into those same channels from day one. More on that shortly.

What Adrian Actually Is

Adrian has two halves: an SDK that integrates into your agent and a backend that handles classification, responses, and the dashboard.

The SDK is a Python library that hooks into your agent (LangChain integration at launch, with more frameworks to come) and intercepts the events it generates. Prompts, tool calls, intermediate reasoning steps, results... all of it gets streamed over a persistent WebSocket to the backend. The backend evaluates each event against the configured policy for that agent and returns a verdict: benign, suspicious, or malicious. The verdict comes back fast enough to act on in real time - our quickest tests to date have been sub 60ms!

What gets flagged depends on what you’ve told Adrian about your agent (more on that below) and what it sees the agent doing. The classification itself is performed by an LLM that has been hardened against prompt injection and is specifically tuned for safety classifications.

You can run Adrian in two ways:

Hosted. We run the backend for you on server-grade GPUs in AWS. You grab an API key from the dashboard, install and setup the SDK with 2 lines of code, and you’re away. There is no paid tier and no Pro upsell waiting in the wings - it is free forever under a fair-use policy and even if you hit the limits we will probably extend them if you ask nicely in the Discord.

Self-hosted. Coming from the practitioner community myself I knew I always wanted to do something open-source and allow data sovereignty if desired. For that reason, we made Adrian’s entire backend deployable as Docker images to be run in your own environment and hardware should you desire. Run it on your own infrastructure, point it at your own GPU (or a CPU if you’re very patient), and nothing leaves your environment. On the CPU side of things, we’ve done some serious work with our early enterprise customers in optimisations and we’ve managed to get similar latencies when running CPU-only as with GPU, but that’s not for open source release (at least not yet anyway).

Privacy

Worth a small section on this because I know where my head would go as a security practitioner when reading the above. PII is scrubbed at the SDK level using regex before anything ever leaves your environment - that’s the first line of defence. Then, before anything is stored on our hosted backend, a deeper LLM-based privacy filter runs over the data to catch the bits that regex inevitably misses. Names, email patterns, addresses, client names, etc.

For self-hosted deployments, this is moot as your data never leaves your environment in the first place.

Configuring Adrian for Your Agent

Here’s the bit I genuinely think is the most under-rated part of Adrian, and something I haven’t seen anywhere else.

When you set Adrian up, you tell it about your agent: what is it for, what should it be doing, what are the known risks specific to its use case, and what behaviours are expected versus out of scope. You write this in plain English, not in some configuration DSL.

Why does this matter? Because how on earth can one security policy make sense for every agent in the world?! It can’t, and yet most products we’ve seen in this space treat them as one collective thing and don’t have any way of personalising the security monitoring for each of your agents. An e-commerce assistant, a finance research bot, a help-desk agent and an internal devops co-pilot have completely different threat models, different sensitive actions, and different definitions of “wrong”. A blanket policy is either too permissive (it lets dangerous things through) or too restrictive (it breaks the agent for normal users).

So, Adrian lets you describe the context yourself. The more detail you give, the more accurately Adrian can flag contextual risk rather than generic risks. It’s worth noting that Adrian doesn’t rely or require this, just like it doesn’t rely or require an agent to reason to monitor it accurately, but both help Adrian perform better and give you more peace of mind that your agents are being monitored in the way you’d like.

Installation

We wanted to keep Adrian’s setup as simple as possible. We have a dedicated docs site (obviously), so we aren’t going to get into this here. But, the headline is its a two-line install.

import adrian

adrian.init(api_key="adr_live_...")

# Your LangChain / LangGraph code runs normally; every call is captured.That’s it. Run your agent as you normally would and the events start flowing into the dashboard immediately.

What’s on the Roadmap

A few things I’m excited about that didn’t make it into the launch but are queued up.

More framework integrations. We started with LangChain because that’s where most production agents currently live, but OpenClaw, CrewAI, AutoGen, n8n and bespoke setups are next. Let us know which you want to see first in the Discord

More alert channels. WhatsApp is in the pipeline. Microsoft Teams will likely follow but similarly these will be guided by what the community wants.

Two-way conversations with Adrian in plain text. Right now alerts on Slack and Discord are one-way notifications. Soon you’ll be able to reply, ask Adrian to explain its reasoning, and reconfigure your agent’s policy from the same channel rather than going back to the dashboard. This is the feature I’m personally most excited about.

Enhanced multi-agent and sub-agent monitoring. As agent systems get more complex, you need monitoring that understands hierarchy and delegation, not just a flat stream of events. This is in v1 of Adrian, but we’re going to expand and improve it.

Memory. Adrian should learn your agent over time, getting more accurate as it sees what normal looks like.

Lots, lots, lots more.

If there’s something you’d like to see on the roadmap, come and say hello on our Discord and tell us. We genuinely want input from you guys to make Adrian the de facto choice for securing your agents.

Closing

This is the first product we’ve put out in the world at Secure Agentics, and I’m so excited that I’m finally able to talk about it publicly. I’m even happier that we’ve made it free and open-source so all you guys can get hands on with it too without going through a 12-month procurement cycle! We’re very big about community here and going OSS just felt like the right thing for us as a company, and for me coming from the community.

If you’ve made it this far, thank you. Please do us a few favours like downloading and playing around with Adrian, dropping us a GitHub star and joining the Discord and getting the conversation started.

We’re finally out in the wild!

GitHub: github.com/secureagentics/adrian

Launch video: https://www.youtube.com/watch?v=NkEISlRhyFs

Discord: discord.gg/Va96q3cv8

Thanks!